LangSmith

Observability platform for LLM applications, tracking prompts, latency, and costs.

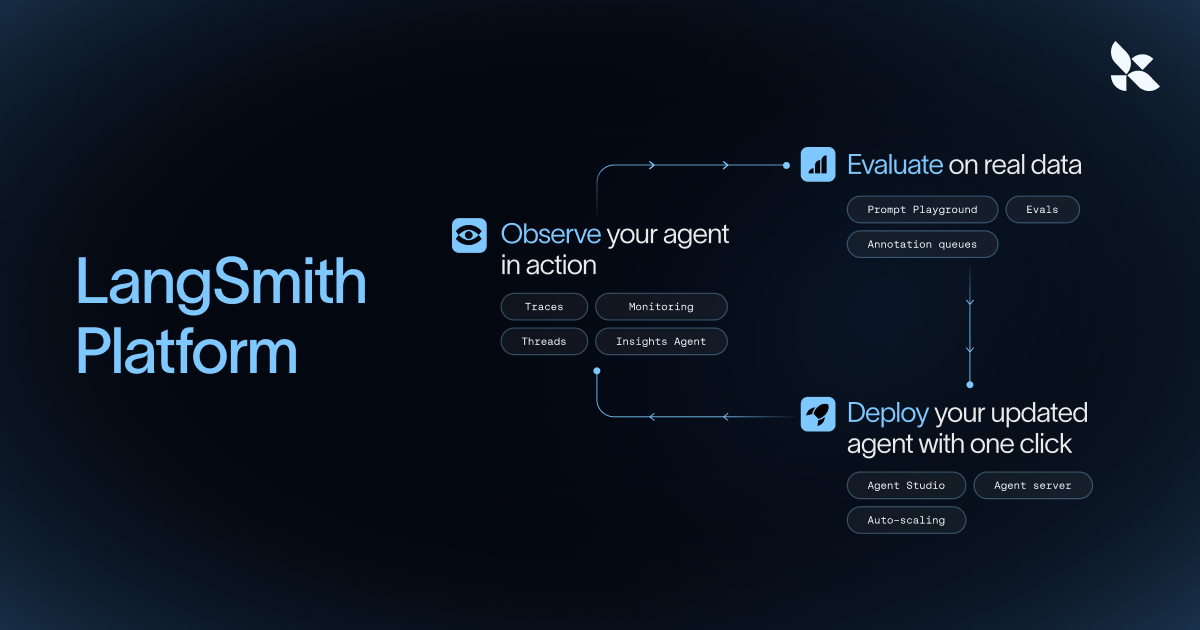

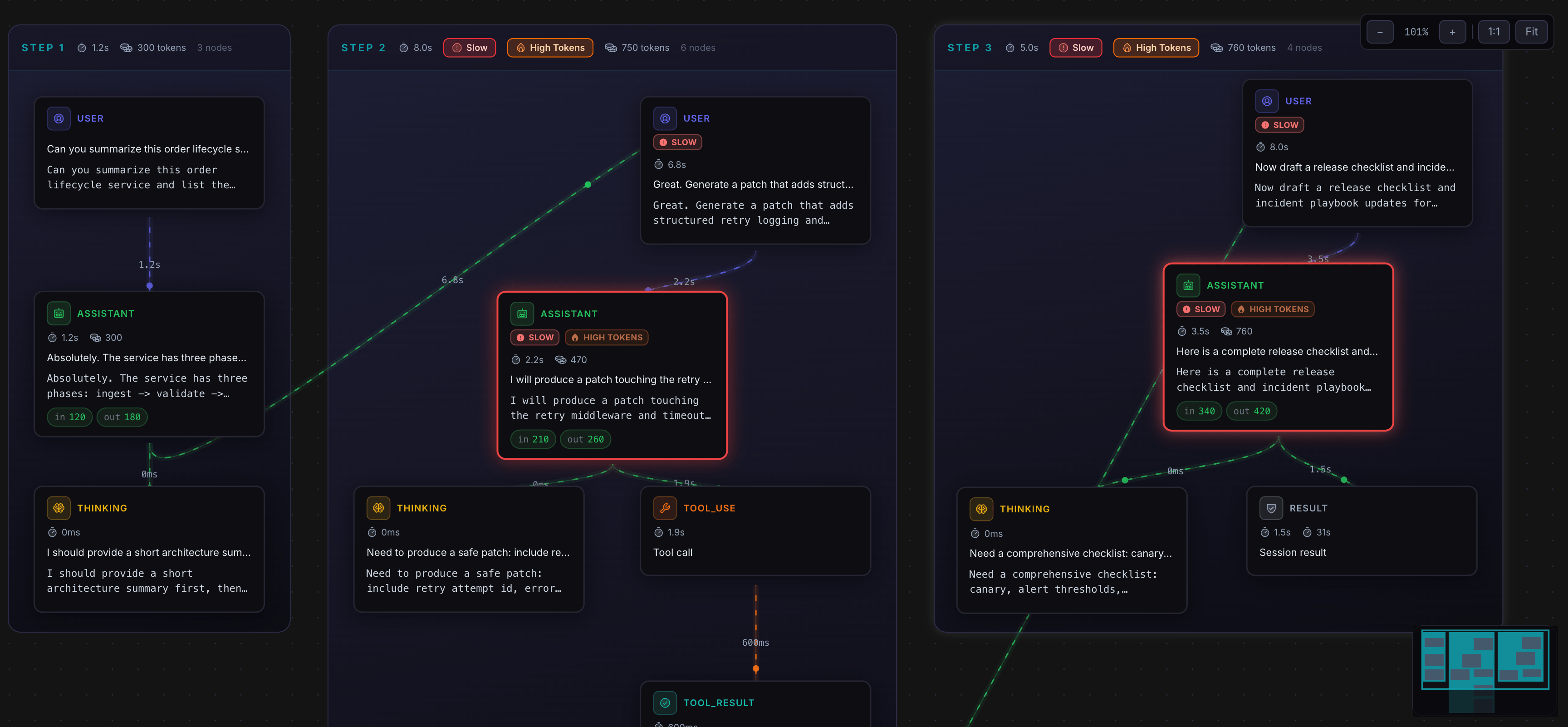

LangSmith provides a framework‑agnostic platform for observing, evaluating, and deploying AI agents and LLM‑driven applications. It captures full execution traces, allowing developers to debug failures by reviewing each step an agent takes, and offers built‑in assistance to summarize large traces. The observability layer also records cost, latency, error rates, and custom qualitative metrics, which can be visualized on dashboards and used to trigger alerts.
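To make the idea of step-level tracing concrete, here is a minimal standard-library sketch of recording latency and errors for each step of a run. It is not LangSmith's SDK or data model: `Span`, `Trace`, `traced`, `retrieve`, and `answer` are hypothetical names invented for this illustration.

```python
import functools
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """One recorded step of a run (hypothetical structure, not LangSmith's schema)."""
    name: str
    latency_s: float
    error: Optional[str] = None


@dataclass
class Trace:
    """An in-memory collection of spans for a single run."""
    spans: list = field(default_factory=list)

    def error_rate(self) -> float:
        # Fraction of recorded steps that raised an exception.
        if not self.spans:
            return 0.0
        return sum(s.error is not None for s in self.spans) / len(self.spans)


TRACE = Trace()


def traced(fn):
    """Record latency and any exception for each call to fn."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        error = None
        try:
            return fn(*args, **kwargs)
        except Exception as exc:
            error = repr(exc)
            raise
        finally:
            # Runs on success and on failure, so every step is captured.
            TRACE.spans.append(Span(fn.__name__, time.perf_counter() - start, error))
    return wrapper


@traced
def retrieve(query: str) -> list:
    # Stand-in for a retrieval step.
    return [f"doc about {query}"]


@traced
def answer(query: str) -> str:
    # Stand-in for an LLM call that depends on the retrieval step.
    docs = retrieve(query)
    return f"Answer based on {len(docs)} document(s)"


result = answer("tracing")
```

Inner steps finish first, so `TRACE.spans` lists `retrieve` before `answer`. A hosted platform would additionally persist these records and attach cost and custom metrics, which this sketch leaves out.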

The evaluation component lets teams run automated judges, code‑based checks, or multi‑turn assessments on production traces, calibrate judges to human preferences, and compare results across version changes. Subject‑matter experts can annotate traces, enabling collaborative quality improvement and regression prevention before updates reach production.
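As a rough illustration of a code-based check compared across versions, the sketch below scores two sets of outputs with a simple rule and flags a regression. The check, the function names, and the sample outputs are all hypothetical and do not reflect LangSmith's evaluation API.

```python
from statistics import mean


def contains_citation(output: str) -> float:
    """Code-based check: 1.0 if the output contains a bracketed citation, else 0.0."""
    return 1.0 if "[" in output and "]" in output else 0.0


def score_version(outputs: list) -> float:
    """Average the check over all outputs produced by one application version."""
    return mean(contains_citation(o) for o in outputs)


# Hypothetical outputs from two versions of the same application.
baseline_outputs = ["Paris is the capital [1].", "I am not sure."]
candidate_outputs = ["Paris is the capital [1].", "Berlin is the capital [2]."]

baseline_score = score_version(baseline_outputs)
candidate_score = score_version(candidate_outputs)

# A candidate that scores below the baseline would be held back.
regressed = candidate_score < baseline_score
```

In practice the rule would be replaced by whatever quality criterion matters for the application, and the comparison would run before a new version is released.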

Deployment features include a managed runtime that supports human‑in‑the‑loop approvals, background processing, and multi‑agent coordination with exactly‑once execution. Agents are registered centrally with versioning, rollbacks, and organization‑wide rollout, while the infrastructure scales horizontally to handle long‑running or bursty workloads.

Similar apps

AI Coding Agents

LangChain

Open-source framework for building applications powered by language models.

AI Coding Agents

Langfuse

LLM engineering platform for model tracing, prompt management, and application evaluation. Langfuse helps teams collaboratively debug…

AI Coding Agents

AgenticLens

Visual debugging, tracing, and replay for agent workflows

AI Coding Agents

ClawTrace

Make your OpenClaw better, cheaper, and faster

AI Coding Agents

Tracium

Tracium is an AI Evaluation Platform for testing and benchmarking AI model performance.

System Monitoring & Maintenance

AgenSights

Know exactly which AI agent is burning your budget.