lean-ctx

Token-saving context runtime for agents.

lean-ctx is a local-first runtime that intercepts file reads and shell output before they reach a large language model, applying mode-aware compression and caching to reduce token usage. It supports multiple read modes, such as full, map, signatures, and diff. A shell hook applies over ninety patterns to compress noisy CLI output from tools like git, npm, cargo, and docker. The system also offers a session memory component that persists task facts and decisions across chats, and an HTTP server mode for streamable MCP access.
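To make the shell-hook idea concrete, here is a minimal Python sketch of pattern-based output compression. It is not lean-ctx's implementation (which is a Rust binary): the `RULES` list, the `compress_output` function, and the summary-line format are all hypothetical, chosen only to illustrate how runs of noisy lines from tools like cargo or npm might be collapsed before reaching the model.

```python
import re

# Hypothetical rules for illustration only; not lean-ctx's actual pattern set.
RULES = [
    (re.compile(r"^\s*Compiling \S+ v\S+"), "compiling"),
    (re.compile(r"^\s*Downloaded \S+ v\S+"), "downloaded"),
    (re.compile(r"^npm warn deprecated ", re.IGNORECASE), "npm deprecation warning"),
]


def compress_output(text: str) -> str:
    """Collapse consecutive lines matching the same rule into one summary line."""
    out = []
    label, count = None, 0

    def flush():
        # Emit the pending summary (if any) and reset the run state.
        nonlocal label, count
        if label is not None:
            out.append(f"[{count} x {label}]")
        label, count = None, 0

    for line in text.splitlines():
        matched = next((name for rx, name in RULES if rx.search(line)), None)
        if matched is None:
            flush()
            out.append(line)  # unmatched lines pass through untouched
        elif matched == label:
            count += 1
        else:
            flush()
            label, count = matched, 1
    flush()
    return "\n".join(out)
```

Lines that match no rule, such as warnings and errors, pass through unchanged, so a sketch like this only trims repetitive progress output.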

The runtime targets developers building AI coding agents that work with code repositories and command-line tools, and aims to lower token costs in environments such as Cursor, Claude Code, Copilot, Windsurf, Codex, and Gemini. By compressing and caching data, it can cut token waste by 60 to 95%, and by up to 99% on cached reads, where a re-read consumes roughly 13 tokens.
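The cached-read figure can be illustrated with a short Python sketch. This is an assumption about how such a cache could work, not a description of lean-ctx internals: the `ReadCache` class, the stub format, and the 2,000-token file size are hypothetical, while the 13-token re-read cost comes from the description above.

```python
import hashlib


class ReadCache:
    """Hypothetical cache: returns a short stub when a file is re-read unchanged."""

    def __init__(self):
        self._seen = {}  # path -> hash of the content last sent in full

    def read(self, path: str, content: str) -> str:
        digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
        if self._seen.get(path) == digest:
            # Content already sent; reply with a tiny marker instead.
            return f"[unchanged: {path} #{digest[:8]}]"
        self._seen[path] = digest
        return content


def savings_percent(full_tokens: int, sent_tokens: int) -> float:
    """Percentage of tokens saved relative to sending the full content."""
    return 100.0 * (1 - sent_tokens / full_tokens)
```

Under these assumptions, a re-read that costs 13 tokens instead of 2,000 gives `savings_percent(2000, 13)`, roughly 99.35%, which is in line with the "up to 99%" figure for cached reads.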

Implementation is a single Rust binary available via a universal installer, Homebrew, npm, and Arch Linux packages. It exposes a set of `ctx_*` tools through an MCP server, integrates with shell commands via a transparent hook, and provides a programmable HTTP endpoint for tool calls, enabling developers to incorporate the context layer into their AI workflows with minimal setup.

Similar apps

AI Coding Agents

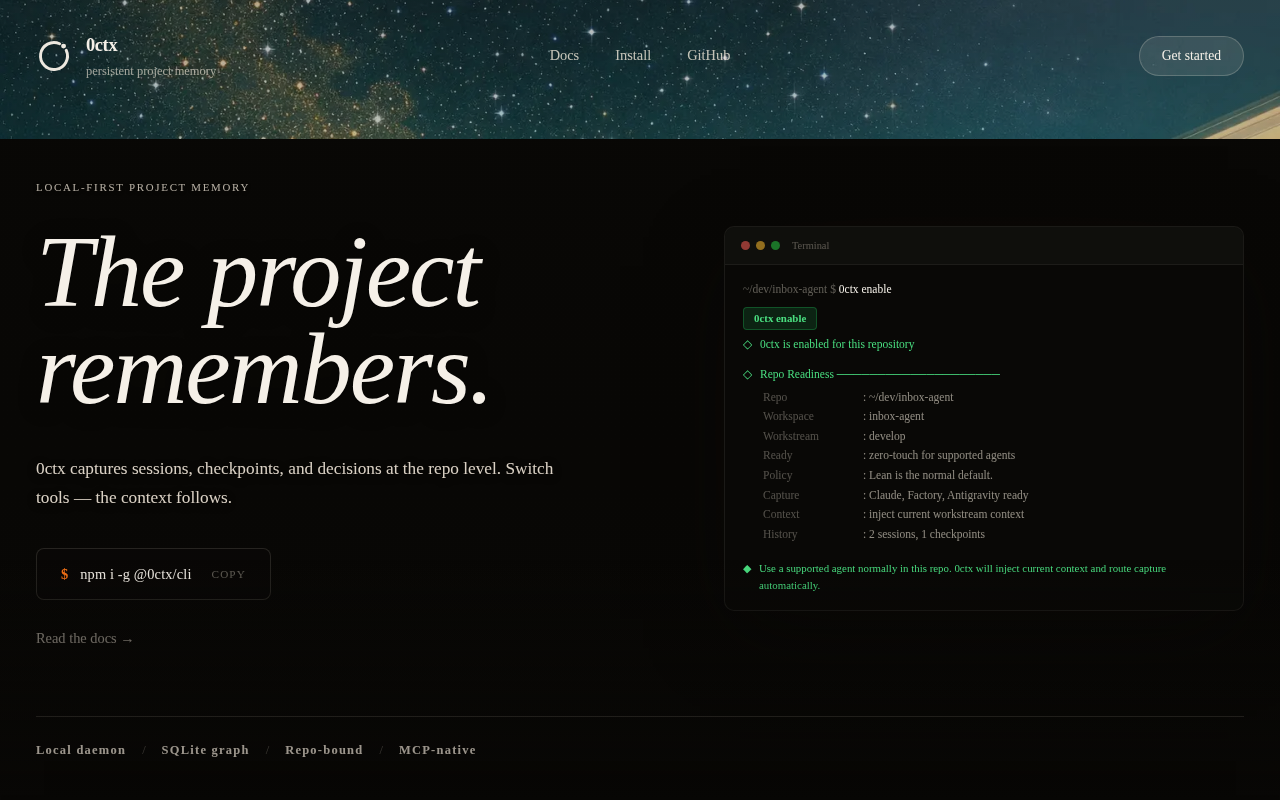

0ctx

Persistent repo memory for AI coding tools

AI Coding Agents

Codex

OpenAI's coding agent that runs in your terminal

AI Coding Agents

Vexp

Local-first context engine for AI coding agents

AI Coding Agents

Engram - MCP Server for AI Dev Agents

Stop burning tokens. Make your agents up to 6x faster.

AI Coding Agents

agent-deck

Dashboard for managing multiple AI coding agent sessions.

AI Coding Agents

Aider

A terminal-based coding assistant that helps you write, modify and understand code through natural language conversations with AI models