LocalAI

Run your AI models locally and generate images and audio (alternative to OpenAI and Claude).

LocalAI provides an open-source engine that runs a wide range of AI models, including large language models, vision and voice models, and image and video generators, directly on local hardware. It supports more than thirty inference backends, such as llama.cpp, vLLM, transformers, whisper, and diffusers. It runs on CPUs, on GPUs from NVIDIA, AMD, and Intel, and on Apple Silicon. The service exposes a drop-in API compatible with OpenAI, Anthropic, and ElevenLabs. Existing applications can therefore switch to a self-hosted, privacy-first deployment with little or no code change beyond pointing the client at the new base URL.
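
Because the API follows the OpenAI wire format, a client mostly needs the right base URL. The following is a minimal sketch that uses only the Python standard library to build an OpenAI-style `/v1/chat/completions` request. It assumes LocalAI is listening on `http://localhost:8080`, its usual default port, which you should adjust for your deployment. The model name `my-local-model` is hypothetical; replace it with a model you have installed.

```python
import json
import urllib.request

# Assumption: LocalAI's usual default address; change it for your deployment.
DEFAULT_BASE_URL = "http://localhost:8080/v1"


def build_chat_request(prompt: str, model: str,
                       base_url: str = DEFAULT_BASE_URL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # e.g. a hypothetical "my-local-model"
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        # Strip a trailing slash so "…/v1/" and "…/v1" both work.
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 120.0) -> str:
    """Send the request and return the first choice's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Against a running instance, `send(build_chat_request("Say hello.", model="my-local-model"))` should return the reply text. Only the base URL differs from a request aimed at OpenAI. The official `openai` Python package achieves the same result by passing `base_url` to its `OpenAI` client.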

The platform includes built‑in support for autonomous agents, role‑based access control, API‑key authentication, and per‑user quotas, making it suitable for multi‑user environments. Optional extensions add semantic search and memory management, enabling more complex document‑intelligence workflows. All components are released under the MIT license and can be deployed via binaries, Docker, Podman, or Kubernetes, with no subscription required.
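
When API-key authentication is enabled, OpenAI-compatible clients normally send the key as a bearer token in the `Authorization` header. The following is a minimal sketch, assuming that convention and the same default address (`http://localhost:8080`). It builds an authenticated request that lists the available models (`GET /v1/models`). The environment variable name `MY_LOCALAI_KEY` is hypothetical.

```python
import os
import urllib.request

# Assumption: LocalAI's usual default address; change it for your deployment.
DEFAULT_BASE_URL = "http://localhost:8080/v1"


def api_key_from_env(var_name: str = "MY_LOCALAI_KEY") -> str:
    """Read the key from a (hypothetical) environment variable, not source code."""
    return os.environ.get(var_name, "")


def build_models_request(api_key: str,
                         base_url: str = DEFAULT_BASE_URL) -> urllib.request.Request:
    """Build a GET /models request that authenticates with a bearer API key."""
    if not api_key:
        # Fail early instead of sending an unauthenticated request.
        raise ValueError("an API key is required when authentication is enabled")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/models",
        headers={"Authorization": f"Bearer {api_key}"},
        method="GET",
    )
```

Role-based access control and per-user quotas are enforced on the server. This sketch only shows how a client presents its key and does not model those checks.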

LocalAI targets developers and organizations that need to run AI workloads locally for cost, latency, or data‑privacy reasons. Its modular design lets users start with a basic OpenAI‑compatible endpoint and incrementally add agentic capabilities or retrieval‑augmented features while keeping the entire stack on‑premises.

Similar apps

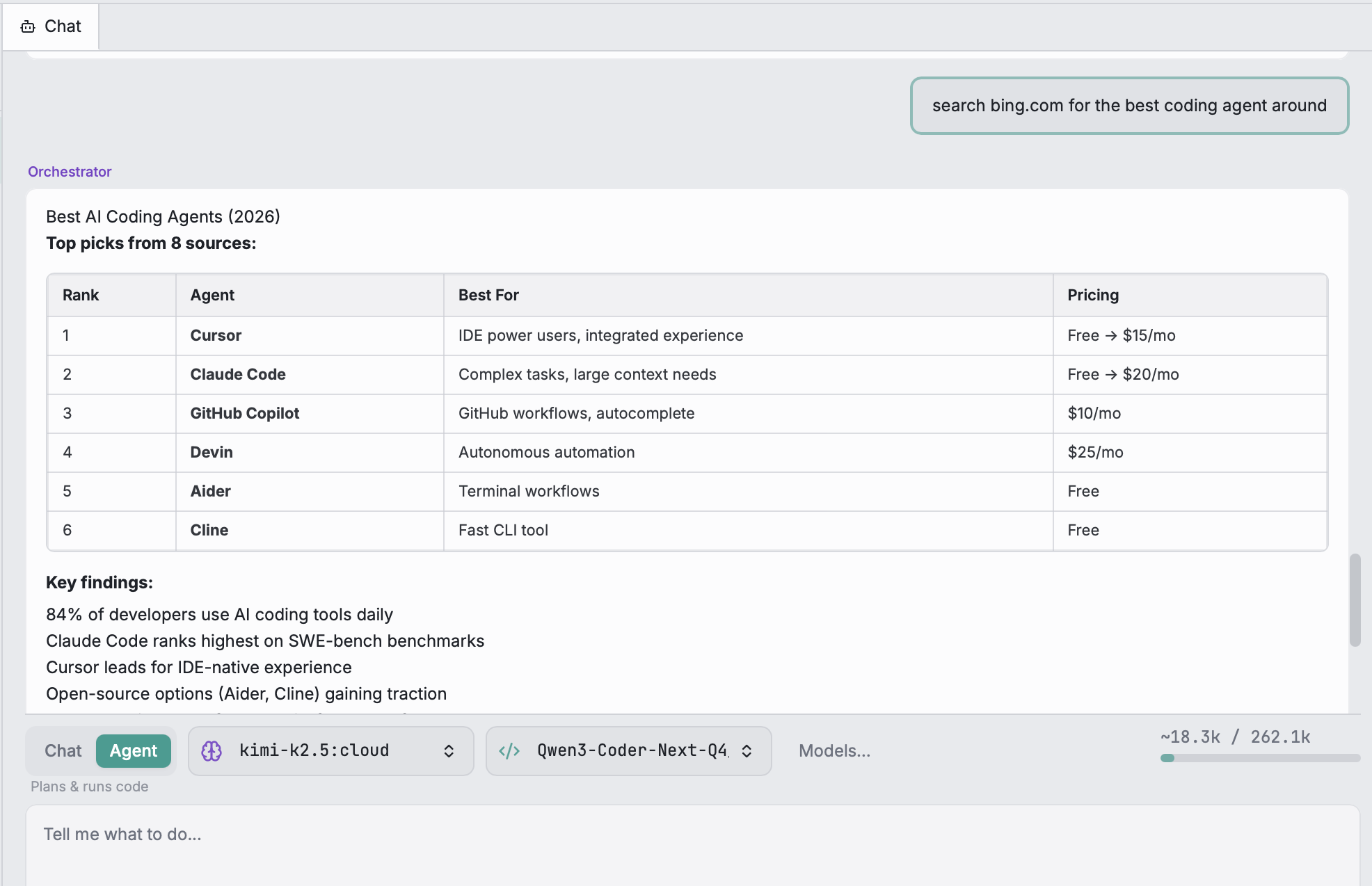

AI Coding Agents

Open-WebUI

User-friendly AI interface; supports Ollama and the OpenAI API.

AI Coding Agents

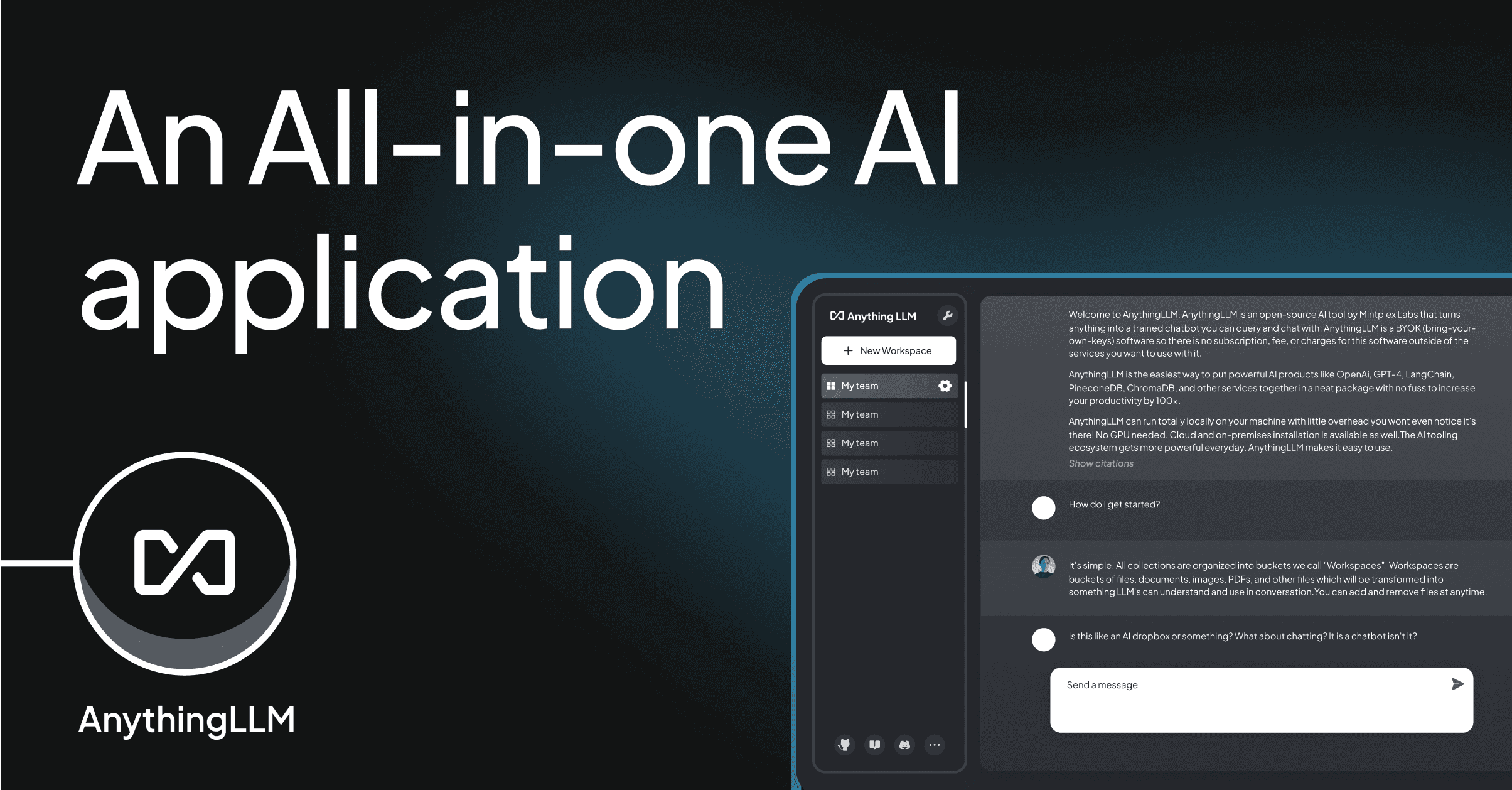

AnythingLLM

All-in-one desktop and Docker AI application with built-in RAG, AI agents, a no-code agent builder, MCP compatibility, and more.

AI Coding Agents

LobeHub

Modern-design AI chat framework supporting multiple AI providers, a one-click-install MCP Marketplace, and Artifacts/Thinking.

AI Coding Agents

Dify.ai

Build, test and deploy LLM applications.

AI Coding Agents

Code Scout – Free Local AI Coding Agent

Local AI coding agent. Zero API costs. Code stays yours.