Arize Phoenix

Open-source platform for LLM tracing, evaluation, and optimization. Features include automatic instrumentation, a prompt playground, and real-time monitoring of AI applications.

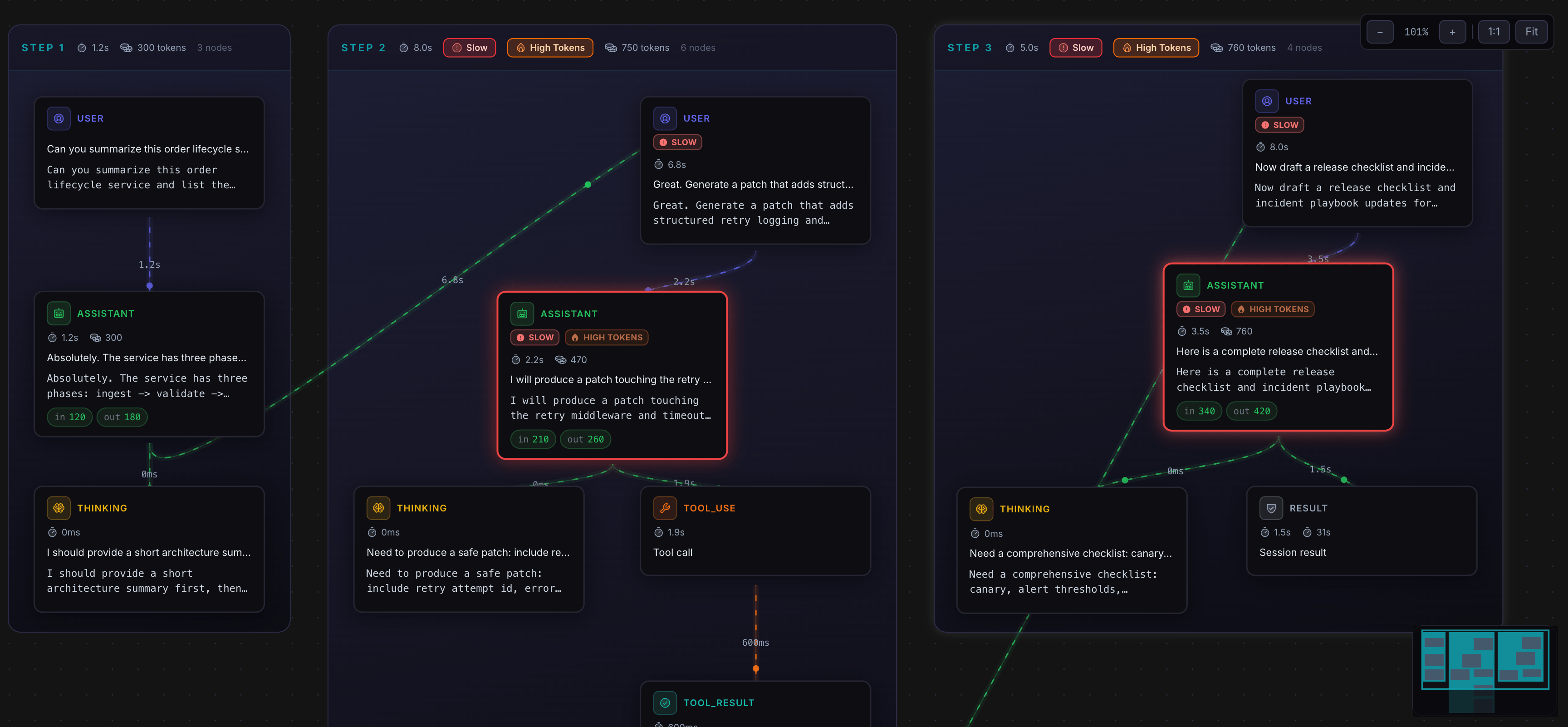

Arize Phoenix provides an open-source platform for tracing, evaluating, and optimizing large language models (LLMs). It automatically instruments LLM calls, captures request and response data, and stores that data for later analysis. The system includes a prompt playground where users can experiment with different prompts and see the resulting model behavior in real time.
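As a conceptual illustration only, the sketch below shows in plain Python the kind of information an instrumented LLM call records: its inputs, its output, how long it took, and whether it failed. It is not Phoenix's API; `traced`, `TRACE_STORE`, and `fake_llm` are hypothetical names, and the in-memory list stands in for the storage a real tracing backend would provide.

```python
import functools
import time
from typing import Any, Callable

# Hypothetical in-memory store standing in for a tracing backend.
TRACE_STORE: list[dict[str, Any]] = []


def traced(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Record inputs, output, latency, and any error for each call to fn."""

    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        record: dict[str, Any] = {
            "name": fn.__name__,
            "input": {"args": args, "kwargs": kwargs},
        }
        start = time.perf_counter()
        try:
            result = fn(*args, **kwargs)
            record["output"] = result
            record["status"] = "ok"
            return result
        except Exception as exc:
            # Keep the failure in the trace, then let the caller see it.
            record["status"] = "error"
            record["error"] = repr(exc)
            raise
        finally:
            # Runs on both success and failure, so every call is stored.
            record["latency_s"] = time.perf_counter() - start
            TRACE_STORE.append(record)

    return wrapper


@traced
def fake_llm(prompt: str) -> str:
    # Hypothetical stand-in for a real model call.
    return prompt.upper()


fake_llm("hello")
print(TRACE_STORE[-1]["output"])  # prints "HELLO"
```

Wrapping the function once, rather than editing every call site, is the basic idea behind instrumenting calls automatically. A real tracing tool would also link nested calls into a single trace and export the records, which this sketch leaves out.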

The platform offers real-time monitoring of AI applications, presenting metrics such as latency, token usage, and error rates through dashboards. It can be installed into Python and Conda environments, is available as a Docker image, and can be deployed to Kubernetes with Helm charts. Documentation, community channels, and versioned releases are linked from the project homepage.
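To make the dashboard metrics concrete, here is a minimal sketch of how latency, token usage, and error rate could be aggregated from a batch of recorded calls. `SpanRecord` and `summarize` are hypothetical names, the sample values are invented for illustration, and Phoenix's actual data model and metric definitions may differ.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class SpanRecord:
    # Hypothetical per-call record; field names are illustrative only.
    latency_ms: float
    prompt_tokens: int
    completion_tokens: int
    error: bool


def summarize(spans: list[SpanRecord]) -> dict[str, float]:
    """Aggregate per-call records into dashboard-style metrics."""
    if not spans:
        raise ValueError("no spans to summarize")
    n = len(spans)
    return {
        "count": n,
        "median_latency_ms": median(s.latency_ms for s in spans),
        "total_tokens": sum(s.prompt_tokens + s.completion_tokens for s in spans),
        "error_rate": sum(s.error for s in spans) / n,  # True counts as 1
    }


spans = [
    SpanRecord(120.0, 50, 20, False),
    SpanRecord(300.0, 80, 0, True),
    SpanRecord(180.0, 40, 30, False),
    SpanRecord(90.0, 60, 25, False),
]
print(summarize(spans))
# {'count': 4, 'median_latency_ms': 150.0, 'total_tokens': 305, 'error_rate': 0.25}
```

The median is used here because a few unusually slow calls shift it less than they shift the mean; a monitoring dashboard would typically show higher percentiles as well.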

Designed for developers and data scientists who need visibility into LLM performance, Phoenix helps identify issues, compare model variants, and guide optimization efforts without requiring manual instrumentation of each model call.


Similar apps

AI Coding Agents

Langfuse

LLM engineering platform for model tracing, prompt management, and application evaluation. Langfuse helps teams collaboratively debug…

AI Coding Agents

Opik

Evaluate, test, and ship LLM applications with a suite of observability tools to calibrate language model outputs across your dev and…

AI Coding Agents

Agenta

LLMOps platform for prompt management, LLM evaluation, and observability. Build, evaluate, and monitor production-grade LLM applications…

AI Coding Agents

Agno

Open-source platform that enables developers to create, deploy and monitor AI agents with built-in memory, knowledge integration, and…

AI Coding Agents

Langsmith

Observability platform for LLM applications, tracking prompts, latency, and costs.

AI Coding Agents

AgenticLens

Visual debugging, tracing, and replay for agent workflows.