Agent TARS

Multimodal AI agent for GUI interaction

Agent TARS is a multimodal AI agent stack that can interact with graphical user interfaces, terminals, browsers, and other applications. It combines vision and large‑language‑model capabilities to interpret screen content and issue commands, aiming to complete tasks in a way that resembles a human workflow. Users operate the agent through a command‑line interface or a web‑based UI, and the system integrates with a variety of real‑world toolkits for extended functionality.

A companion desktop application provides a native graphical interface powered by the UI‑TARS model. It supports both local and remote operators for computers and browsers, allowing the agent to control devices without additional configuration. Recent releases add streaming support for multiple tools, timing statistics, event‑stream debugging, and an isolated sandbox for safe execution of external commands.

The project targets developers and power users who need automated assistance for repetitive or complex GUI tasks on macOS. What sets it apart is the combination of vision‑guided interaction and multimodal language processing, with integration across command‑line, web, and desktop environments.

Similar apps

AI Coding Agents

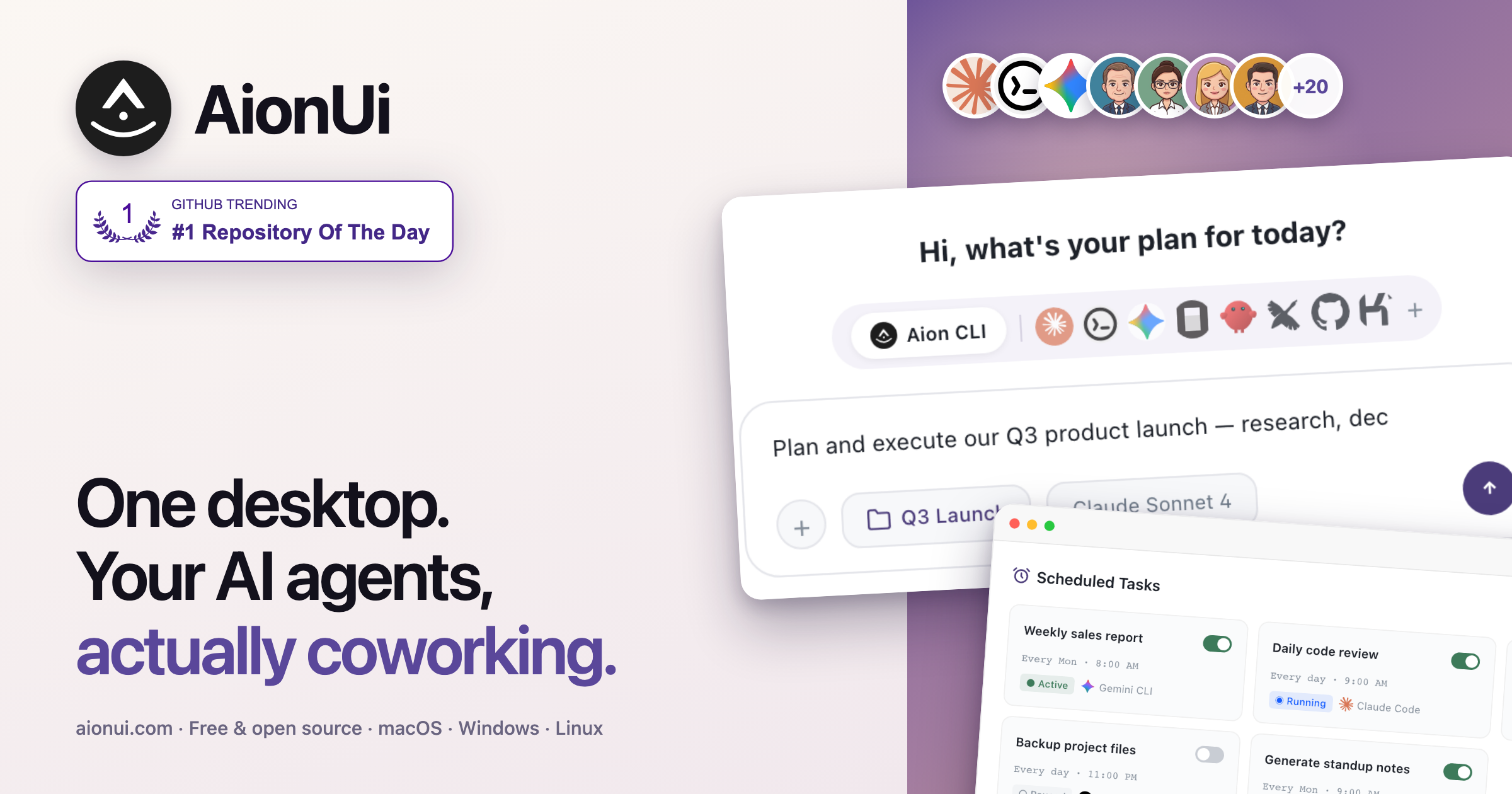

AionUi

Unified GUI for command-line AI agents

AI Coding Agents

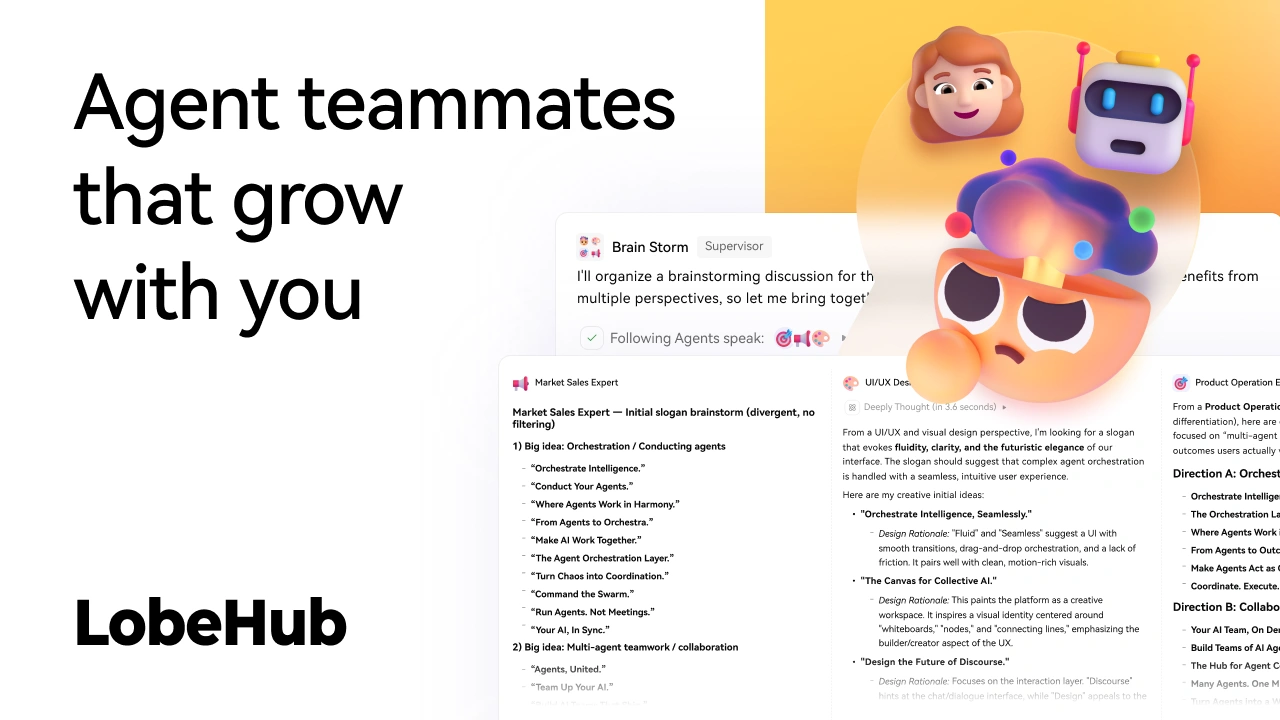

LobeHub

AI chat framework

AI Coding Agents

AiderDesk

Desktop GUI for Aider AI pair programming

AI Coding Agents

Hive

AI agent orchestrator for parallel coding across projects

AI Agents & Automation

Craft Agents

AI assistant for connecting and working across data sources

AI Agents & Automation

BrowserOS

Open-source agentic browser