LlamaChat

Client for LLaMA models

LlamaChat is a macOS client for running and chatting with LLaMA‑based large language models locally on your computer. It supports the original LLaMA models as well as fine‑tuned variants such as Alpaca, GPT4All, and Vicuna, and it offers a chatbot‑style conversation without relying on cloud services. The app can import raw PyTorch checkpoints directly or load pre‑converted .ggml model files. Model conversion is handled by the open‑source llama.cpp and llama.swift libraries.

The software is aimed at developers, researchers, and hobbyists who want to experiment with or deploy LLaMA‑derived models on a Mac, on both Intel and Apple Silicon hardware. Because it runs entirely offline, it suits users concerned about data privacy and those working in environments without internet access.

LlamaChat is free and open source, and it can be installed either by direct download or via Homebrew. It does not ship with any model files, so users must obtain models themselves and use them in accordance with each provider's terms. The project is maintained independently of the original model creators.

Similar apps

Window & Desktop Management

LlamaBarn

Menu bar app for running local LLMs

Window & Desktop Management

LocalChatAI

Private, Offline AI Chatbot powered by Apple Intelligence.

AI Chat & Voice Agents

HuggingChat

Chat client for models on HuggingFace

AI Agents & Automation

Ollamac

Interact with Ollama models

Window & Desktop Management

MindMac

AI chat client for multiple providers in one place.

AI Chat & Voice Agents

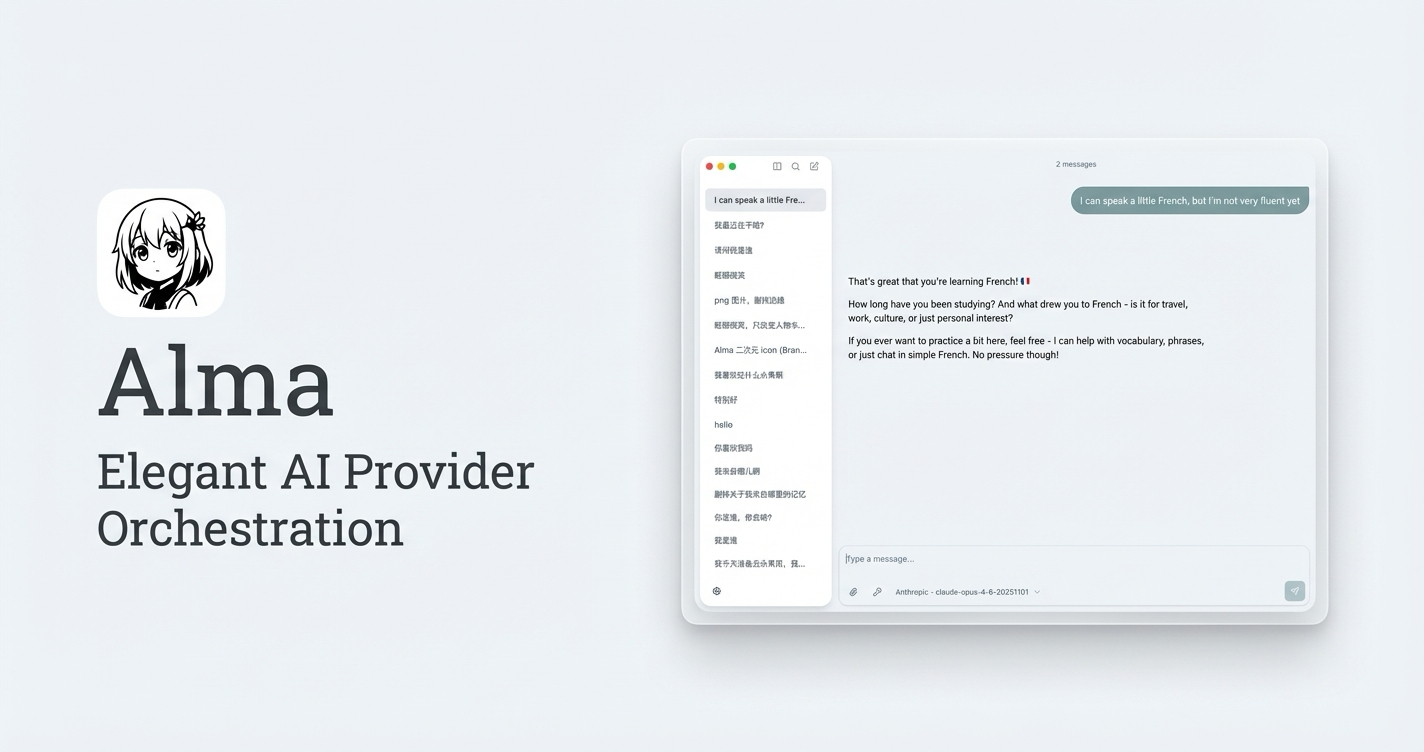

Alma

AI chat application